The OpenWorldLib Framework provides orientation on how to define World Models and what they must be able to do.

In AI, a concept is increasingly coming into the spotlight: World Models. Although there is great progress in this area, there has been a lack of a clear, unified definition and a standardized technical foundation. To better categorize this, OpenWorldLib was developed: a comprehensive and standardized inference framework for advanced World Models (Original title: “OpenWorldLib: A Unified Codebase and Definition of Advanced World Models”).

Below we take a detailed look at the exact definition of these models, how they differ from other technologies, the architecture of the OpenWorldLib framework, the experimental setup, and a concluding assessment of future developments.

The Approach: How are World Models actually defined?

The term “World Model” is often used very differently in research, which has led to considerable confusion. OpenWorldLib starts here and provides a precise definition based on the core goal of these systems: the ability to continuously learn from the real world and interact with it. A World Model is therefore defined as a model or framework centered on the perception-based creation of internal representations. It must obligatorily be equipped with capabilities for action-based simulation and a long-term memory to understand and predict the dynamics of a complex world.

A World Model is not tied to a specific architecture or an isolated task but rather represents an overarching level of performance. Mathematically and conceptually, World Models traditionally rely on three fundamental probability distributions:

- The State Transition Model, which predicts the next latent state based on the current state and the action performed. This state integrates an intrinsic memory to manage long-term dependencies in complex tasks.

- The Observation Model, which derives sensory perception (e.g., visual, auditory, or proprioceptive) from the respective state.

- The Reward Model, which calculates the feedback or reward from the interaction between the actions and the environment.

What belongs to World Models according to this definition

Based on the strict definition of OpenWorldLib, specific tasks can be identified that clearly fall into the realm of World Models because they require profound understanding and interaction with the physical world.

Interactive Video Generation

Predicting the next frame (Next-Frame Prediction) is considered one of the best-known paradigms in World Model research. It involves the active, interactive creation of video sequences based on user input and environmental dynamics. Examples include early regressive models like Matrix-Game-2 or newer, diffusion model-based approaches like Lingbot-World, Hunyuan-GameCraft, YUME-1.5, Cosmos, and WoW. Wan-IT2V also belongs here, although it still struggles to maintain physical consistencies during interactive operations.

Multimodal Reasoning

A true World Model must not only see the complex physical world but understand it deeply. This includes spatial, temporal, and causal reasoning. The models must process high-dimensional and continuous information. Therefore, research is increasingly shifting towards latent inferences. A concrete example of a system successfully combining multimodal reasoning and generation is Bagel, built on the architecture of the language model Qwen.

Vision-Language-Action (VLA)

Because the goal of World Models is the interaction of physical devices like robots or autonomous vehicles with the real environment, VLA is among the absolute core competencies. VLA models translate visual and linguistic inputs into concrete physical actions. Concrete examples tested in the framework are the model π0 and its further development π0.5, which use the Vision-Language base “PaliGemma”, and LingBot-VA, which combines continuous action syntheses with video diffusion.

3D Representation and Simulators

To ensure that physical rules remain consistent during a long-term interaction, World Models often use 3D geometries and simulators. This prevents objects from disappearing or spaces from changing illogically when an agent moves. Models that take on this task are, for example, VGGT, InfiniteVGGT, and OmniVGGT, which translate images into real geometric structures.

Systems for fast generation of 3D scenes like FlashWorld and the Hunyuan3D series are also important because they provide the World Model with an interactive “sandbox” for tests. Platforms like AI2-THOR and LIBERO serve as simulation environments for embodied AI.

What is expressly NOT included in World Models

Many modern AI tasks are incorrectly referred to as World Models just because they use similar data structures or outputs. OpenWorldLib draws a clear line here:

Pure Text-to-Video Generation (e.g., Sora)

When OpenAI released the model “Sora”, many called it a “world simulator”. OpenWorldLib explicitly contradicts this. The output of a video does not make a model a World Model. What Sora lacks is the multimodal input to analyze the environment and true interaction. Without the ability to actively understand complex physical rules and respond to interventions, it remains a mere generation tool.

Code Generation and Web Search

Models in these areas often use structures for long-term interactions borrowed from World Models. However, they completely lack reference to physical reality. They do not process multimodal environmental influences and therefore fall outside the definition.

Avatar Video Generation

Even though avatar video generation systems process multimodal inputs and act over longer periods of time, they are primarily aimed at entertainment. They have nothing to do with the exploration or fundamental understanding of a complex physical environment and are therefore not World Models.

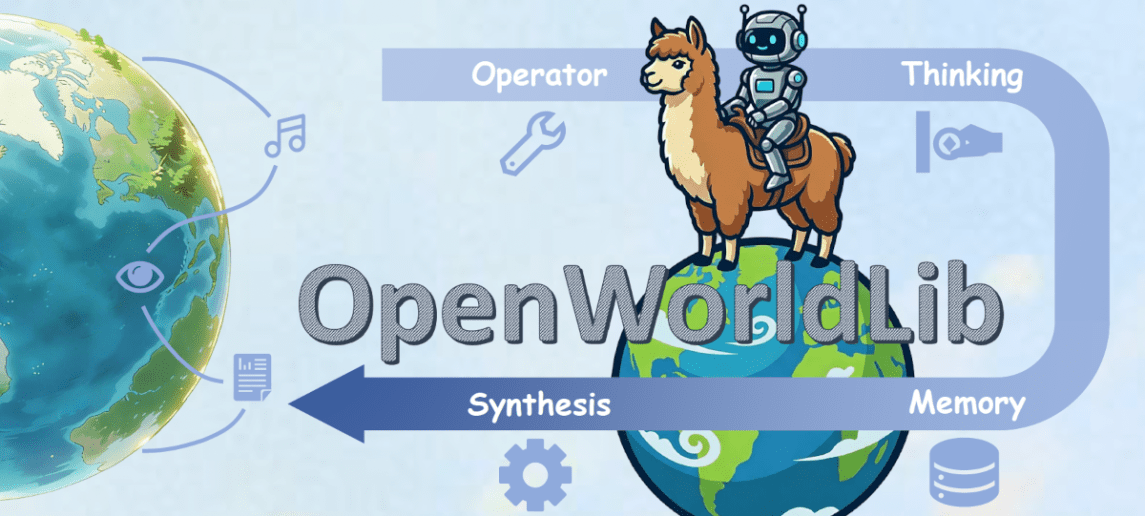

The Architecture of OpenWorldLib: a modular approach

Because true World Models require an enormous range of complex capabilities, their deployment must be systematically orchestrated. OpenWorldLib provides a highly decoupled, modular framework for this. It allows various models to be combined under a unified API. The architecture is divided into the following clearly defined individual modules:

The Operator Module

This module serves as the primary bridge and gatekeeper between raw inputs from the environment or user and the framework’s internal computations. A World Model must process entirely different data streams, be they text instructions, images, continuous control actions, or audio signals. The Operator module handles standardization. It performs two main tasks: first, validation, to ensure that formats, shapes, and data types meet the strict requirements of downstream AI models. Second, preprocessing, where raw signals are converted into structured tensors, for example by cropping images, tokenizing text, or normalizing action spaces.

The Synthesis Module

The Synthesis module is the creative core of the framework, representing the World Model’s “implicit memory.” Here, learned internal dynamics are used to simulate environmental reactions and generate sensory feedback. This module is divided into three highly specialized subcategories:

- Visual Synthesis converts textual or scene-based specifications into visible predictions, i.e., images or video sequences. This is necessary to visualize alternative futures in a simulator.

- Audio Synthesis generates continuous waveforms so the world isn’t silent; it creates ambient sounds synchronized with visual events.

- Synthesis of other signals (especially VLA) is the crucial part for physical embodiment. Here, multimodal contexts are translated into executable physical commands and kinematic states, which can be sent directly to simulators or robotic hardware to close the interaction loop.

The Reasoning Module

Perception alone is not enough for a World Model; it must logically penetrate the perceived world. The Reasoning module is responsible for the deep understanding of physical reality. It draws on spatial relationships, integrates diverse context information, and generates structured semantic interpretations before any action is executed. The module is divided into three branches:

- General Reasoning uses multimodal LLMs (MLLMs) to uniformly process text, image, video, and audio.

- Spatial Reasoning specializes entirely in 3D understanding and object localization in space.

- Audio Reasoning analyzes and interprets auditory signals.

The Representation Module

While the Synthesis module relies on internal, implicit probabilities, the Representation module handles creating explicit, human-defined structures. Real-world perception is transformed into fixed, tangible 3D structures like point clouds, depth maps, and camera positions. This module essentially builds a manual “sandbox,” a verifiable simulation where the World Model can test whether its planned physical actions make any physical sense in a fixed coordinate system. It also supports cloud APIs to export these geometries to external physics engines.

The Memory Module

Without memory, long-term interaction cannot occur. The Memory module serves as the persistent center of the entire system. It continuously stores structured information from perception, inferences drawn, generated outputs, and executed physical actions. It manages history across numerous interactions and provides mechanisms to retrieve exactly the right historical context for current tasks (context retrieval). Additionally, it can update new states after each pipeline pass and manage separate memory sessions for different tasks in parallel.

The Pipeline Module

The Pipeline module orchestrates the modules. It encapsulates the entire data flow: it receives raw input, sends it to the Operator for verification, queries the Memory module for past contexts, orchestrates Reasoning, Synthesis, and Representation in parallel, and finally returns structured outputs while simultaneously updating the memory. The pipeline allows simple one-click inference for single tasks but also supports multi-step, continuous interactions. This encapsulation guarantees clean separation of module logic while maximizing data transfer efficiency.

Figure 1: OpenWorldLib Framework. Source: OpenWorldLib: A Unified Codebase and Definition of Advanced World Models

The Experimental Setup

To validate the performance and flexibility of the OpenWorldLib framework, the researchers conducted extensive evaluations. Hardware-wise, the experimental setup relied primarily on extremely powerful graphics cards, namely NVIDIA A800 (with 80 GB VRAM) and NVIDIA H200 with 141 GB VRAM.

The tests covered the key capabilities of a World Model:

In the area of interactive video generation, it was tested whether models correctly respond to movement commands (forward, backward, left, right) and camera rotations. It was shown that older models like Matrix-Game-2 suffer from color shifts in long sequences, while modern models like Hunyuan-WorldPlay deliver outstanding visual results.

Figure 2: OpenWorldLib: Example of interactive video generation. Source: OpenWorldLib: A Unified Codebase and Definition of Advanced World Models

In multimodal reasoning, it was evaluated how well the framework can translate perceptions into sound decisions and plans. For 3D generation, single images were transformed into 3D scenes. Although models like VGGT could generate different perspectives, they still showed weaknesses regarding geometric consistency and blurry textures during strong camera movements, while FlashWorld was extremely fast but had problems with sharp details.

Vision-Language-Action (VLA) was evaluated particularly intensively. Here, the researchers used the simulators AI2-THOR for photorealistic rendering and LIBERO for reproducible robot manipulations. Models like π0 and LingBot-VA showed their ability to plan physical dynamics and execute them robustly.

Concluding Assessment and Outlook

OpenWorldLib makes a significant and urgently needed contribution to AI research by drawing a clear dividing line for the first time as to what is a World Model and what is not. It standardizes the way multimodal inputs and interaction controls are processed, creating fundamental comparability for research.

However, the analysis within the framework also reveals critical challenges for the future. Currently, many models are based on predicting the next image (Next-Frame Prediction). This imitates how humans process sensory inputs. However, this conflicts with the current hardware architecture of computers, which is inherently optimized for predicting the next token (Next-Token Prediction). Even when models try to predict images, the data must be converted into tokens. This is very inefficient. In order to realize true, fluid World Models, hardware iterations and adaptations of the fundamental transformer structures will inevitably have to occur.

At the same time, the successful use of LLMs like Qwen within systems like Bagel shows that text-based models have great potential to serve as the backbone for World Models. The future of World Models will therefore depend heavily on data-centric methods, including the synthesis of multimodal data, domain-specific data augmentation, and strict quality controls.

OpenWorldLib provides the theoretical foundation and the practical tool to lead AI into the complexity of our physical reality.

The OpenWorldLib Framework is available for download at Hugging Face and GitHub.

Ihr Wartungsspezialist für alle großen Hardware Hersteller

More Articles

OpenWorldLib: new framework defines what a world model is – and what isn’t

The OpenWorldLib Framework provides orientation on how to define World Models and what they must be able to do.In AI,

Gas power plants: How the energy demand of AI data centers undermines climate protection

The increasing energy demand of AI data centers threatens to undermine existing climate goals, as there is an increasing

The Mirage Effect: When AI is inventing images

AI systems might be performing much worse at image recognition than benchmark results suggest. The reason is the so-called Mirage