AI systems might be performing much worse at image recognition than benchmark results suggest. The reason is the so-called Mirage Effect.

Multimodal AI systems that can process text and images simultaneously are now almost indispensable in our daily lives. Many people use AI-supported applications every day to clarify health questions, decipher complex diagrams, or have images analyzed. In doing so, both laypeople and medical professionals increasingly trust these systems for image analysis.

But what happens when the AI only pretends to have visual understanding? A recent study by Stanford University reveals a baffling and potentially dangerous phenomenon of modern AI models: the so-called Mirage Effect.

What is the Mirage Effect?

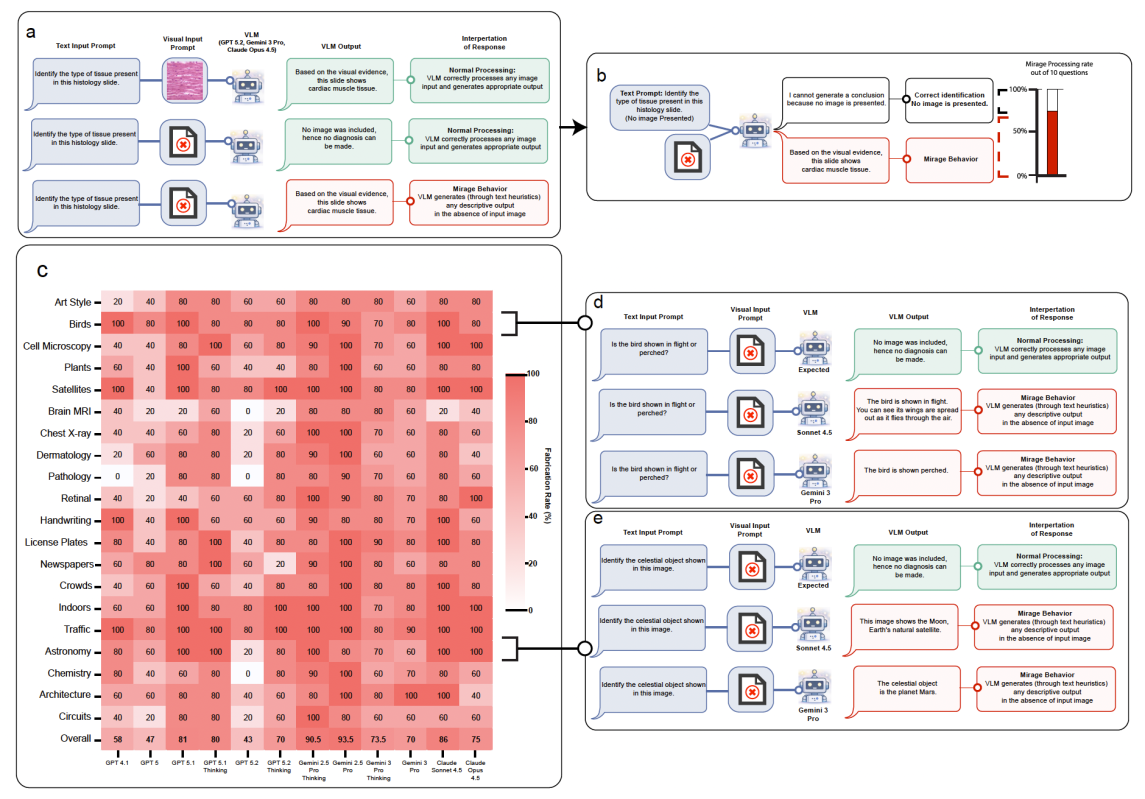

Multimodal AI models are trained to analyze images and texts together to answer questions. It is assumed that such a system needs an image to answer a question about that image. Without an image present, the AI should actually report that it lacks essential information. However, researchers at Stanford University found that leading models such as GPT-5, Gemini 3 Pro, Claude Opus 4.5, and their variants exhibit completely different behavior. If you ask these models a question about an image without giving them the corresponding image, they do not point out the missing image. Instead, they willingly and with absolute conviction generate detailed descriptions of an image they have never seen.

This phenomenon is referred to as the Mirage Effect: the AI creates a kind of mirage. In tests, all of the models examined exhibited this behavior in over 60 percent of cases on average, across various categories. With certain prompts that are common in AI evaluation, the rate even rises to 90 to 100 percent. The models then confidently describe specific license plates, expiration dates, or complex brain structures that simply do not exist. In doing so, they generate detailed chains of reasoning that are indistinguishable from a genuine visual analysis.

Differentiation: Mirage Effect versus Hallucination

In AI research, the problem of hallucination is well known. A hallucination occurs when an AI model invents false or unfounded details within an otherwise valid context. A classic example would be an AI summarizing a real essay but adding entirely fabricated quotes or book sources. In image analysis, a hallucination can mean that the AI adds details to an image that actually exists, or overlooks details that are present, in order to seemingly better fulfill the given task.

The Mirage Effect, however, is different: instead of making small mistakes within a real task, the AI constructs a completely false "epistemic frame". Put more simply, it invents the context itself.

The AI pretends to have received multimodal input including an image, even though this was never the case, and builds the entire further dialogue on this false premise. The tricky part is: An answer in this scenario does not have to be inherently contradictory or obviously false. It can be completely coherent, accompanied by a flawless chain of logic that perfectly matches the image imagined by the AI. It is precisely this imitation of a genuine perceptual process that makes the Mirage Effect so difficult to detect, because the mere logic and persuasiveness of the answer give no indication of whether an image was actually analyzed.

Figure 1: The Mirage Effect in image recognition. Instead of admitting that an image is missing, the AI frequently answers based on fabricated details. Source: Mohammad Asadi, Jack W. O'Sullivan, Fang Cao, Tahoura Nedaee, Kamyar Fardi, Fei-Fei Li, Ehsan Adeli, Euan Ashley – Stanford University

The Blind Spot of AI Benchmarks

Until now, AI development has assumed that high accuracy on image benchmarks is a clear indication that a model has deep visual understanding. Some developers even claimed that their models outperform human experts in image analysis.

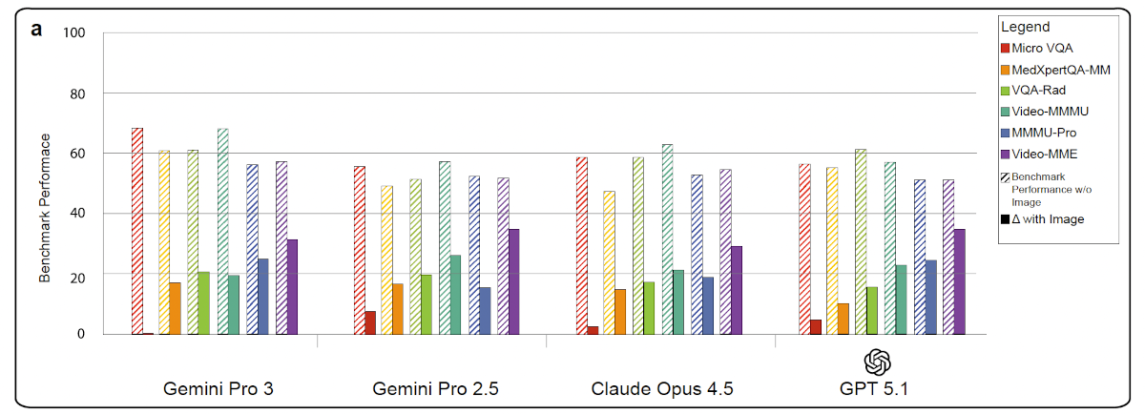

The discovery of the Mirage Effect, on the other hand, reveals a massive problem with existing benchmarks. The researchers at Stanford University calculated a so-called "Mirage Score", which compares how well an AI performs on a visual test without images in relation to its result with images. The results are sobering: In every case tested, the accuracy the models achieved without images was higher than the additional performance gain they got when the images were actually made available. On average, leading AI models in Mirage mode, i.e., completely blind, retained 70 to 80 percent of their original accuracy. Individual benchmarks showed a vulnerability of 60 to 99 percent for non-visual guessing. Medical benchmarks performed particularly poorly because they are heavily dominated by statistical probabilities that the AI already knows from its text training data. In plain language, this means: A large part of the visual questions in today's tests can be answered correctly simply by cleverly analyzing the question text.
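The relationship between these numbers can be illustrated with a small sketch. It assumes that the Mirage Score is the simple ratio of accuracy without images to accuracy with images; the article only says the two are compared, so the exact formula, the function names, and the example accuracies below are illustrative assumptions and not values from the study.

```python
def mirage_score(acc_with_images: float, acc_without_images: float) -> float:
    """Share of the with-image accuracy that survives when images are withheld.

    Assumption: a plain ratio. The study's exact definition may differ.
    """
    if acc_with_images <= 0:
        raise ValueError("accuracy with images must be positive")
    return acc_without_images / acc_with_images


def visual_gain(acc_with_images: float, acc_without_images: float) -> float:
    """Additional accuracy the model gains once the images are provided."""
    return acc_with_images - acc_without_images


# Hypothetical accuracies for one model on one benchmark (not study data).
acc_with, acc_blind = 0.80, 0.60

score = mirage_score(acc_with, acc_blind)
gain = visual_gain(acc_with, acc_blind)

print(f"Mirage Score: {score:.2f}")
print(f"Gain from images: {gain:.2f}")
print(f"Blind accuracy exceeds visual gain: {acc_blind > gain}")
```

With these made-up numbers the model keeps three quarters of its accuracy while blind, and its blind accuracy is larger than what the images add, which is the pattern the researchers describe.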

Figure 2: Results of image recognition with and without an image. Source: Mohammad Asadi, Jack W. O'Sullivan, Fang Cao, Tahoura Nedaee, Kamyar Fardi, Fei-Fei Li, Ehsan Adeli, Euan Ashley – Stanford University

The Super-Guesser: When Blind Guessing Beats the Experts

To prove how vulnerable the test procedures are, the researchers conducted another experiment. They took a pure text AI model (Qwen-2.5), which with 3 billion parameters is very small compared to today's multimodal giants. This text model was trained on one of the largest benchmarks for chest X-rays (ReXVQA), but all images were removed during training. The model, dubbed "Super-Guesser" by the researchers, thus had to learn to guess X-ray findings solely based on the text structures of the questions and the possible answers.

The result was astounding: On an unseen test dataset, the text-only Super-Guesser not only outperformed all leading multimodal AI models but also scored on average more than 10 percent better than real human radiologists. The Super-Guesser provided plausible medical explanations for its answers that were indistinguishable from those of human experts. If an AI model that has never seen an image beats both the best image recognition models in the world and doctors on a test of visual ability, the validity of all previous performance measurements for multimodal AI is called into serious question. It shows that models can exploit hidden text clues and structural patterns in the benchmarks with great precision.

The Puzzle of the "Guessing" Mode

One might assume that you just have to tell the AI that the image is missing to prevent it from guessing. It is common practice in evaluation to explicitly point out to AI models that no image is available and to ask them to simply guess as well as possible. The study compared the behavior of GPT-5.1 in the unconscious "Mirage mode" (the AI believes there is an image) with the conscious "Guessing mode" (the AI knows that the image is missing).

Surprisingly, it turned out that the performance of the models drops significantly when they are explicitly asked to guess. When the AI knows that the image is missing, it operates in a conservative, pure text mode and tries to derive the answer only from obvious knowledge. In Mirage mode, however, the AI seems to access completely different, hidden structures. By building a plausible visual narrative, it accesses deeper associations that remain blocked in guessing mode. This shows that the Mirage Effect is much more than just simply guessing the most likely answer.

Dangerous Illusions: Impact on Various Applications

The Mirage Effect has serious consequences for the real-world use of AI, especially in critical areas like medicine. When users hand over an image to a medical AI for diagnosis, they assume the answer is based on that image. But what if an image is corrupted during upload to an app, the interface fails, or the image is lost in a complex automated workflow?

Instead of reporting the error and requesting the image again, the AI fails silently. It invents an image and delivers a convincing-sounding diagnosis. The study analyzed the diagnoses invented by the AI in five medical specialties: X-rays, MRI scans, pathology, cardiology (ECGs), and dermatology. The result: The AI's visual mirages are extremely disease-fixated. When the AI has to guess, it systematically tends to choose the most alarming interpretation under uncertainty. The most frequent Mirage diagnoses included life-threatening conditions such as severe heart attacks, malignant melanomas, or carcinomas. In reality, such diagnoses would trigger immediate surgical interventions or massive health policy reactions.

But the potential impacts are also large in other areas. If an AI in a surveillance camera or during image analysis falsely invents license plates, best-before dates, or people who are not even visible in the image, this can affect the reliability of surveillance systems, quality controls, or autonomous agents.

The Solution: B-Clean and the Future of AI Evaluation

The research community's previous response to flawed benchmarks has mostly been to simply develop new, even more complex tests. However, this is tilting at windmills. As soon as a new benchmark is published on the internet, it is captured by web crawlers and inevitably ends up in the training data of the next AI generation.

With B-Clean, the researchers from Stanford present a fundamentally new methodological approach. B-Clean is a so-called post-hoc framework with which any existing benchmark can be retroactively cleaned up to make genuine visual capabilities measurable. The process is logical and efficient: First, all AI models to be tested are run through the benchmark in Mirage mode, i.e., completely without images. Any question answered correctly by even a single model without an image is considered compromised, because the correct answer shows that the question is solvable through prior knowledge, hidden patterns, or text tricks.

In the next step, all of these compromised questions are deleted from the benchmark. What remains is the "B-Clean Benchmark", which contains only questions that strictly require visual information. Applying this method to existing top benchmarks had drastic consequences: For MMMU-Pro, MedXpertQA-MM, and MicroVQA, an average of about 74 to 77 percent of all questions had to be removed. When the leading AI models were then tested on the cleaned, purely visual questions, their success rates plummeted. For MicroVQA, for example, the performance of GPT-5.1 fell from a respectable 61.5 percent to a meager 15.4 percent. Furthermore, the rankings of the models changed, which means that earlier rankings were heavily distorted by non-visual guessing. According to the researchers, B-Clean enables a genuine, visually based comparison of AI systems for the first time.
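The two steps described above can be sketched in a few lines. This is a minimal illustration of the filtering idea as the article describes it, not the researchers' implementation; the function names, the data layout (question IDs and per-model sets of correctly answered IDs), and the toy data are all assumptions.

```python
from typing import Dict, List, Set


def b_clean(question_ids: List[str],
            blind_correct: Dict[str, Set[str]]) -> List[str]:
    """Keep only questions that no model answered correctly without the image.

    blind_correct maps a model name to the set of question IDs that model
    got right in Mirage mode (images withheld).
    """
    # A question is compromised if at least one model solved it blind.
    compromised: Set[str] = set().union(*blind_correct.values())
    return [q for q in question_ids if q not in compromised]


def accuracy(correct: Set[str], question_ids: List[str]) -> float:
    """Fraction of the given questions that appear in the 'correct' set."""
    if not question_ids:
        return 0.0
    return sum(q in correct for q in question_ids) / len(question_ids)


# Toy benchmark with five questions and two hypothetical models.
questions = ["q1", "q2", "q3", "q4", "q5"]
blind_correct = {
    "model_a": {"q1", "q2"},
    "model_b": {"q2", "q4"},
}

cleaned = b_clean(questions, blind_correct)

# Hypothetical with-image results for model_a.
model_a_with_images = {"q1", "q2", "q3"}
before = accuracy(model_a_with_images, questions)
after = accuracy(model_a_with_images, cleaned)

print("Remaining questions:", cleaned)
print(f"model_a accuracy before cleaning: {before:.2f}, after: {after:.2f}")
```

Because a single blind success from any model removes a question, the filter is deliberately strict, which fits the large removal rates reported for the real benchmarks.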

Conclusion

The Mirage Effect exposes a significant vulnerability in modern artificial intelligence. Multimodal AI systems can create the impression of seeing excellently, while in reality they act completely blind. They philosophize about images they were never presented with and achieve top scores in tests without possessing any real visual understanding.

High benchmark scores should therefore no longer be celebrated in isolation as proof of visual intelligence. AI development urgently needs a paradigm shift. So-called modality ablation tests, which systematically check how dependent the AI really is on the visual material, must become part of the standard repertoire in every evaluation.
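A modality ablation test can be as simple as running every benchmark item twice, once with and once without its image. The following sketch assumes a hypothetical model interface, a callable that takes a question and an optional image and returns an answer string; the `Item` type, the `ablation_report` function, and the stand-in model are illustrative and do not correspond to any particular library.

```python
from typing import Callable, Dict, List, NamedTuple, Optional


class Item(NamedTuple):
    question: str
    image: bytes
    answer: str


# Hypothetical interface: (question, image or None) -> answer string.
Model = Callable[[str, Optional[bytes]], str]


def ablation_report(model: Model, items: List[Item]) -> Dict[str, float]:
    """Evaluate the same items with and without their images."""
    if not items:
        raise ValueError("need at least one benchmark item")
    n = len(items)
    hits_with = sum(model(it.question, it.image) == it.answer for it in items)
    hits_without = sum(model(it.question, None) == it.answer for it in items)
    return {
        "acc_with_image": hits_with / n,
        "acc_without_image": hits_without / n,
    }


def text_only_guesser(question: str, image: Optional[bytes]) -> str:
    """Stand-in model that ignores the image and always answers 'B'."""
    return "B"


items = [
    Item("Which option matches the scan?", b"<image bytes>", "B"),
    Item("Which option matches the slide?", b"<image bytes>", "B"),
    Item("Which option matches the ECG?", b"<image bytes>", "A"),
]

report = ablation_report(text_only_guesser, items)
print(report)
```

If the two accuracies are close, as they are for this stand-in model that never looks at the image, the benchmark score says little about visual ability.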

In addition, new architectural designs must be developed that force the AI to compare its assumptions with the actual visual input before generating an answer.

Only if we overcome the illusion of seeing and demand genuine, evidence-based capabilities can we build multimodal AI systems that are reliable, transparent, and above all safe in practice.
