MIT has presented a new approach that can measure the uncertainty of LLMs more reliably.

AI language models like ChatGPT often sound highly convincing, even when they are spouting complete nonsense. These so-called hallucinations are a major problem if we want to rely on their answers. Previous methods for measuring an AI's uncertainty often fail precisely because of the models' exaggerated "self-confidence". Researchers at MIT have now developed an approach that addresses exactly this weakness by subjecting a model to a reality check against other AIs.

To understand how this works, we need to distinguish between two basic types of uncertainty: aleatoric and epistemic uncertainty.

The Problem with Blind Self-Confidence: Aleatoric Uncertainty

Previous approaches to detecting AI errors have mostly focused on so-called aleatoric uncertainty (AU). Put simply, it measures how erratic, or random, a model's own outputs are. The term comes from the Latin "alea", which means "dice" but can also refer more generally to a game of chance.

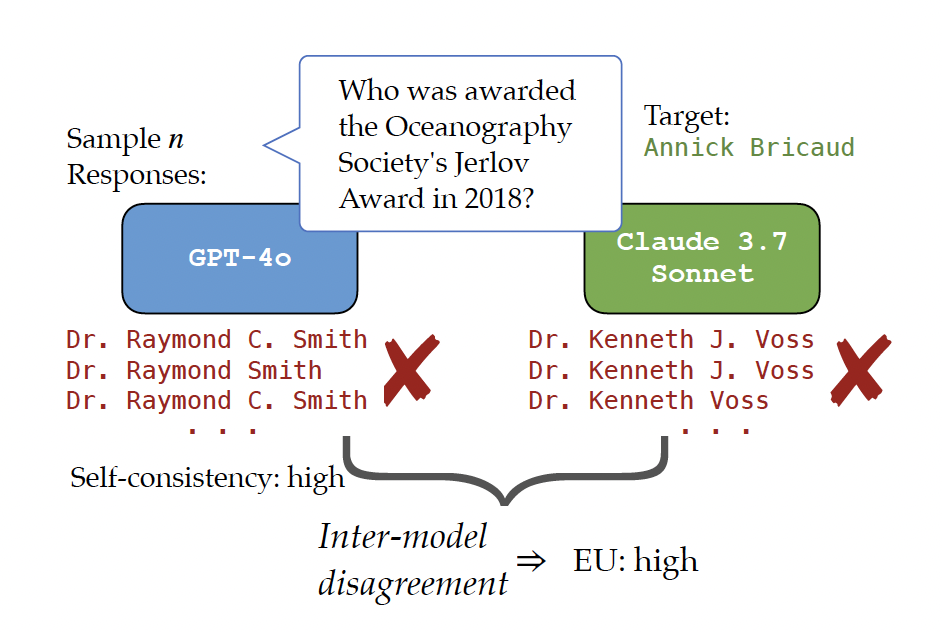

In practice, aleatoric uncertainty is measured via self-consistency: you simply ask the AI the same question several times. If the model gives a different answer each time, its aleatoric uncertainty is high; the model "doubts". If it gives the same answer again and again, it is considered certain.
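As an illustration, the self-consistency check can be sketched in a few lines of Python. The article does not say how answers are compared or scored, so this sketch makes two assumptions: answers count as "the same" if they match after trivial normalization (a crude stand-in for the semantic comparison a real system would need), and AU is the fraction of sample pairs that disagree. The function names are hypothetical.

```python
from itertools import combinations


def normalize(answer: str) -> str:
    # Hypothetical stand-in for a semantic comparison:
    # ignore case, surrounding whitespace and a trailing period.
    return answer.strip().lower().rstrip(".")


def aleatoric_uncertainty(samples: list[str]) -> float:
    """Fraction of answer pairs from ONE model that disagree.

    0.0 means fully self-consistent; 1.0 means every sample differs.
    """
    pairs = list(combinations(samples, 2))
    if not pairs:
        raise ValueError("need at least two samples of the same question")
    disagreements = sum(normalize(a) != normalize(b) for a, b in pairs)
    return disagreements / len(pairs)


# Repeated samples of "What is the capital of Australia?" (toy data)
print(aleatoric_uncertainty(["Canberra", "canberra.", " Canberra"]))  # 0.0
print(aleatoric_uncertainty(["Sydney", "Canberra", "Melbourne"]))     # 1.0
print(aleatoric_uncertainty(["Sydney", "Sydney", "Sydney"]))          # 0.0
```

Note that three identical wrong answers ("Sydney") also score 0.0, which is exactly the blind spot described in the next paragraph.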

The big problem: a language model can be extremely confident and still completely wrong. If the AI stubbornly repeats the same invented fact, i.e., hallucinates consistently, its aleatoric uncertainty drops to zero and the system raises no alarm.

Looking Outside the Box: Epistemic Uncertainty

To eliminate this blind spot, the new approach brings epistemic uncertainty (EU) into play. While aleatoric uncertainty asks "How certain is the model of its prediction?", epistemic uncertainty asks "How much should we trust this particular model at all?" EU captures the uncertainty that arises because the model we are using may not be the best one for this specific question.

Since no single perfect model exists, the MIT researchers use a clever trick: they query a small group of other, similarly capable language models from different developers.

If the original model is firmly convinced of a wrong answer, the other models are likely to give different answers. This semantic discrepancy between the models, i.e., how far their answers differ in content, reveals the epistemic uncertainty.

A major advantage of this method is that it works purely on the generated text answers. It is therefore a black-box method that requires no access to the models' internals, such as their weights or token probabilities.
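A matching sketch of the cross-model check. Again, the scoring is an assumption, not the researchers' published formula: every answer of the target model is compared with every answer from each reference model, and EU is the fraction of these cross-model pairs that disagree. The model names and the `normalize` helper are hypothetical. The function only ever sees text, in line with the black-box property.

```python
from itertools import product


def normalize(answer: str) -> str:
    # Hypothetical stand-in for a semantic comparison.
    return answer.strip().lower().rstrip(".")


def epistemic_uncertainty(target_answers: list[str],
                          reference_answers: dict[str, list[str]]) -> float:
    """Fraction of (target, reference-model) answer pairs that disagree."""
    pairs = [
        (t, r)
        for answers in reference_answers.values()
        for t, r in product(target_answers, answers)
    ]
    if not pairs:
        raise ValueError("need answers from the target and at least one reference model")
    disagreements = sum(normalize(t) != normalize(r) for t, r in pairs)
    return disagreements / len(pairs)


# Toy data: the target model is consistently wrong, the reference models agree.
target = ["Sydney", "Sydney", "Sydney"]
references = {
    "model_b": ["Canberra", "Canberra"],  # hypothetical reference model
    "model_c": ["Canberra"],              # hypothetical reference model
}
print(epistemic_uncertainty(target, references))  # 1.0
```

Here the target model's answers are perfectly self-consistent, yet EU is at its maximum, so the confident hallucination is flagged.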

Figure 1: High epistemic uncertainty despite high self-consistency. Source: MIT

The Solution: Total Uncertainty

Combining both values yields the Total Uncertainty (TU = AU + EU). This simple sum produces a considerably more robust measure: the model is checked both for internal contradictions (aleatoric) and for disagreement with other AIs (epistemic).
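The combination can be sketched by computing both scores side by side. The assumptions are stated plainly: string normalization stands in for true semantic comparison, and both AU and EU are scored as pairwise disagreement rates. Using the same kind of score for both keeps them on a common 0 to 1 scale, so that simply adding them is meaningful. All function and model names are hypothetical.

```python
from itertools import combinations, product


def normalize(answer: str) -> str:
    # Hypothetical stand-in for a semantic comparison.
    return answer.strip().lower().rstrip(".")


def disagreement_rate(pairs) -> float:
    """Fraction of answer pairs whose normalized texts differ."""
    pairs = list(pairs)
    if not pairs:
        raise ValueError("no answer pairs to compare")
    return sum(normalize(a) != normalize(b) for a, b in pairs) / len(pairs)


def total_uncertainty(target_samples: list[str],
                      reference_answers: dict[str, list[str]]) -> tuple[float, float, float]:
    """Return (AU, EU, TU) with TU = AU + EU."""
    # AU: internal contradictions among the target model's own samples
    au = disagreement_rate(combinations(target_samples, 2))
    # EU: disagreement between the target model and the reference models
    eu = disagreement_rate(
        (t, r)
        for answers in reference_answers.values()
        for t, r in product(target_samples, answers)
    )
    return au, eu, au + eu


references = {"model_b": ["Canberra"], "model_c": ["Canberra"]}  # hypothetical models
print(total_uncertainty(["Sydney"] * 3, references))    # (0.0, 1.0, 1.0)
print(total_uncertainty(["Canberra"] * 3, references))  # (0.0, 0.0, 0.0)
```

AU alone would rate both cases as equally certain; only the EU term separates the confident hallucination from the confident correct answer.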

In the researchers' extensive tests, Total Uncertainty clearly outperformed conventional methods at distinguishing confident but wrong answers from correct ones.

Practical Applications

The new approach offers major advantages for the real-world deployment of AI systems:

- Deployment in high-risk areas: In fields such as medicine, legal advice, or finance, AI errors can have serious consequences. Reliable uncertainty estimates are a basic prerequisite for using language models safely there at all.

- Intelligent silence (selective prediction): If we know a model's total uncertainty, we can set thresholds. If the uncertainty is too high, the AI declines to answer instead of guessing wildly. The tests show that this selective answering can drastically reduce the error rate among the answers that are actually given.

- Fact-checking and translations: The method works particularly well for tasks where there is only one clearly correct answer, such as specific knowledge questions or translations. Here, the lack of agreement between different models reveals factual errors with particular accuracy.
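Selective prediction boils down to a threshold check. A minimal sketch, assuming a TU score per question is already available; the `Prediction` type, the threshold values, and the toy data are hypothetical and only illustrate the mechanism, they are not results from the study.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Prediction:
    answer: str
    total_uncertainty: float  # TU = AU + EU, computed beforehand
    is_correct: bool          # only known when evaluating on labeled data


def respond(pred: Prediction, threshold: float) -> Optional[str]:
    """Return the answer, or None (abstain) if TU exceeds the threshold."""
    return None if pred.total_uncertainty > threshold else pred.answer


def error_rate_on_answered(preds: list[Prediction], threshold: float) -> float:
    """Share of wrong answers among the questions the system actually answers."""
    answered = [p for p in preds if respond(p, threshold) is not None]
    if not answered:
        raise ValueError("threshold too strict: no question was answered")
    return sum(not p.is_correct for p in answered) / len(answered)


# Invented toy data for illustration only.
preds = [
    Prediction("Canberra", 0.0, True),
    Prediction("Sydney", 1.0, False),  # high TU: flagged
    Prediction("Paris", 0.1, True),
    Prediction("1912", 0.8, False),    # high TU: flagged
]
print(error_rate_on_answered(preds, threshold=2.0))  # 0.5 (answers everything)
print(error_rate_on_answered(preds, threshold=0.5))  # 0.0 (abstains twice)
```

Raising the threshold answers more questions but lets more errors through; lowering it does the opposite, so the threshold trades coverage against accuracy.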

Conclusion and Assessment

We no longer have to rely on the mere self-confidence of a single AI. By sending language models into a kind of "panel discussion" with their AI colleagues, we can judge much better whether we can trust an answer or not.
