The growing energy demand of AI data centers threatens to undermine existing climate goals, as operators increasingly rely on natural gas to meet it. Are there still solutions?

AI Race – Is Humanity Running to Its Doom?

Large language models like GPT, Gemini, or Llama are becoming larger, more powerful, and more power-hungry at ever shorter intervals. What is rarely discussed publicly: To satisfy this hunger, technology companies are increasingly relying on natural gas power plants, which they build right next to their data centers.

Researchers are already speaking of a "Fossil AI Alliance": a structural entanglement between the technology industry and the fossil energy industry that threatens to undo progress in climate protection. When billions flow into natural gas pipelines and turbines today, dependencies are created that will last for decades. After all, a gas power plant that goes online in 2026 is unlikely to be shut down again by 2030.

The pace of development is enormous. The drive to train models with ever more parameters has triggered an infrastructure boom on a scale reminiscent of 19th-century railway construction and industrialization. But there is a crucial difference: Electricity generation can now be switched to renewable sources. AI infrastructure, by contrast, follows a paradoxical logic: To build the "intelligence" of the future, it burdens the atmosphere with the emissions of the past.

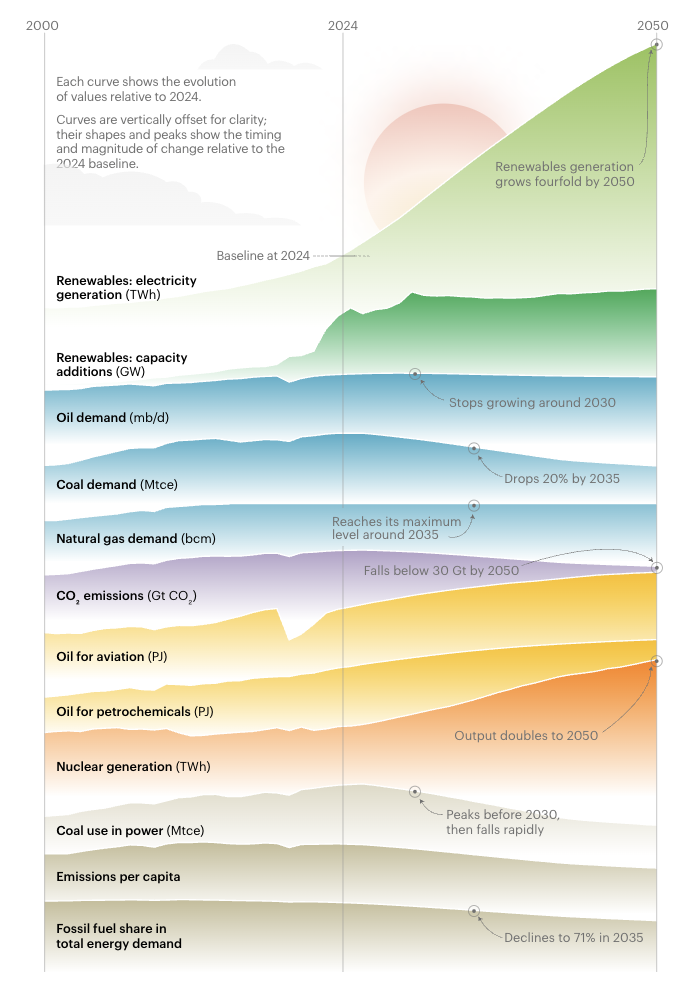

A forecast of global energy demand by the International Energy Agency (IEA) shows that natural gas demand will peak around the year 2035. However, the question is whether this scenario still holds true given the rapidly expanding data centers:

Figure 1: Forecast of global energy demand. Source: IEA

Can a planet with limited resources cope with exponential growth in digital computing power, primarily fueled by burning hydrocarbons?

Current Case Studies: The Return of Natural Gas

In recent months, several major projects have become known that show how far the technology industry has already strayed from its green promises. A common pattern: Instead of waiting for the public power grid, the corporations are building their own power plants right behind the data center fence.

The Microsoft-Chevron Alliance in Texas

At the end of March 2026, a deal became public that made people sit up and take notice: Microsoft, the oil company Chevron, and the investment fund Engine No. 1 signed an exclusive agreement for power supply. A natural gas power plant with a capacity of 2,500 megawatts is to be built in West Texas. Estimated costs: around seven billion US dollars. The power plant will serve exclusively to operate a huge campus of data centers running AI services like ChatGPT and Copilot. Chevron supplies the fuel, GE Vernova the turbines, Microsoft the purchase guarantee. One could say: The future of artificial intelligence is fueled by natural gas.

Google's "Goodnight" Campus and Hidden Emissions

Google is also quietly relying on gas. A report by Wired documents how a Google-funded data center project in Armstrong County, Texas, threatens to undermine the company's climate targets. The so-called Goodnight campus is to be partially powered by private gas turbines. According to permitting documents, these turbines could emit more than 4.5 million tons of greenhouse gases annually. That is roughly equivalent to the annual CO₂ emissions of one million gasoline-powered cars and exceeds the emissions of an average coal-fired power plant.

Meta and Infrastructure Expansion in Louisiana

In the US state of Louisiana, Meta and the energy provider Entergy Louisiana have signed a 2 billion US dollar agreement. A hyperscale data center is planned in Richland Parish, which will be supplied by seven to ten new gas power plant units with a total capacity of up to 7.5 gigawatts. The agreement is marketed as benefiting local electricity customers, with projected savings of $2.65 billion over 20 years. However, the added capacity amounts to more than 30 percent of Louisiana's entire power grid and will be met primarily by fossil fuels.

The following table shows some planned major projects for data centers:

| Project / alliance | Region | Capacity | Primary energy source | Cost, savings, or emissions |
|---|---|---|---|---|
| Microsoft / Chevron / Engine No. 1 | West Texas, USA | 2,500 MW | Natural gas (GE Vernova turbines) | $7 bn (investment) |
| Google "Goodnight" campus | Texas, USA | Not specified (high GW range) | Natural gas (turbines) | 4.5 million t CO₂/year |
| Meta / Entergy Louisiana | Richland Parish, USA | 5,000–7,500 MW | Natural gas, solar, nuclear power | $2.65 bn (customer savings) |
| Pure Data Centre Group | Dublin, Ireland | 110 MW | Natural gas engines (microgrid) | €1 bn (entire project) |

Table 1: Selected major projects for data centers. Data sources: Economic Times, National Today, ENR, Entergy, 2026

The Role of Large Models and Training Processes

Where does this enormous hunger for electricity come from in the first place? The answer lies in the architecture of modern AI systems. Large language models follow so-called scaling laws: Performance improves predictably as models are given more parameters, more training data, and more computing power.

Training GPT-3 consumed an estimated 1,287 megawatt-hours (MWh), corresponding to around 552 tons of CO₂ emissions. Newer models such as Meta's Llama variants already exceed 21,500 MWh. A single training run for a leading model in 2026 requires a constant load of about 25 megawatts over several months. Because companies train and fine-tune dozens of these models simultaneously, demand at individual data centers adds up to gigawatt levels. Even a typical AI data center consumes as much electricity as 100,000 households.
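The figures above can be cross-checked with a little back-of-the-envelope arithmetic. The Python sketch below derives the carbon intensity implied by the GPT-3 estimate (552 t CO₂ for 1,287 MWh) and the energy drawn by a constant 25 MW load. Because the text only says "several months", the 90-day run length is an assumption for illustration, and the helper names are hypothetical.

```python
HOURS_PER_DAY = 24


def implied_carbon_intensity(energy_mwh: float, emissions_t: float) -> float:
    """Tonnes of CO2 per MWh implied by a reported energy/emissions pair."""
    return emissions_t / energy_mwh


def run_energy_mwh(load_mw: float, days: float) -> float:
    """Energy in MWh drawn by a constant load (MW) over a number of days."""
    return load_mw * days * HOURS_PER_DAY


GPT3_MWH = 1_287  # estimate quoted in the text
GPT3_CO2_T = 552  # estimate quoted in the text
RUN_DAYS = 90  # assumption: "several months" taken as 90 days

intensity = implied_carbon_intensity(GPT3_MWH, GPT3_CO2_T)
run_mwh = run_energy_mwh(25, RUN_DAYS)

print(f"GPT-3 implied intensity: {intensity:.3f} t CO2/MWh")
print(f"25 MW over {RUN_DAYS} days: {run_mwh:,.0f} MWh")
print(f"That is about {run_mwh / GPT3_MWH:.0f} times the GPT-3 estimate")
```

Under these assumptions, a single 2026-scale run would draw roughly 54,000 MWh, about 42 times the GPT-3 estimate; a shorter or longer run scales linearly.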

Inference: The Invisible Continuous Load

While training makes the headlines, inference, i.e., the day-to-day operation in which millions of users send queries to chatbots or use image generators, accounts for an estimated 80 to 90 percent of AI's total energy consumption. A single ChatGPT query requires about 2.9 watt-hours, while a conventional Google search gets by with 0.3 watt-hours, a factor of almost ten. With billions of daily interactions worldwide, this creates a base load that puts power grids under permanent pressure.
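To show how per-query figures turn into a permanent base load, the Python sketch below compares the two per-query estimates quoted above and scales them to a daily volume. The one billion queries per day is a hypothetical round number chosen for illustration, not a figure from the article, and the function names are illustrative.

```python
CHATBOT_WH_PER_QUERY = 2.9  # estimate quoted in the text
SEARCH_WH_PER_QUERY = 0.3  # estimate quoted in the text


def daily_energy_mwh(queries_per_day: float, wh_per_query: float) -> float:
    """Convert a query volume and per-query energy into MWh per day."""
    return queries_per_day * wh_per_query / 1_000_000  # Wh -> MWh


def average_load_mw(mwh_per_day: float) -> float:
    """Spread a daily energy total evenly over 24 hours to get a continuous load."""
    return mwh_per_day / 24


ratio = CHATBOT_WH_PER_QUERY / SEARCH_WH_PER_QUERY
queries = 1_000_000_000  # hypothetical daily volume, not a figure from the text

chat_mwh = daily_energy_mwh(queries, CHATBOT_WH_PER_QUERY)
search_mwh = daily_energy_mwh(queries, SEARCH_WH_PER_QUERY)

print(f"Energy per query, chatbot vs. search: {ratio:.1f}x")
print(f"Chatbot: {chat_mwh:,.0f} MWh/day, about {average_load_mw(chat_mwh):.0f} MW continuous")
print(f"Search:  {search_mwh:,.0f} MWh/day, about {average_load_mw(search_mwh):.1f} MW continuous")
```

At this assumed volume, the chatbot case alone works out to a continuous load of roughly 121 MW, compared with about 12.5 MW for conventional search, which illustrates why inference behaves like a base load rather than a short peak.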

Why Gas Power Plants of All Things?

The decision in favor of natural gas is driven not by ideology but by hard economics rooted in the physical properties of the power grid. Four reasons stand out:

Base Load Capability: Solar and wind energy are weather-dependent and therefore fluctuating. Gas power plants, on the other hand, deliver stable power around the clock. Data centers need 99.999 percent availability. Without huge and expensive battery storage, renewables cannot achieve this alone.

Speed: Connecting a data center to the public power grid takes four years or longer in hotspots like Northern Virginia or Dublin. A private gas power plant on site is up and running in a fraction of this time.

Grid Bottlenecks: Transmission networks are at their limit in many regions. Private gas turbines relieve the public grid and make companies independent of grid outages.

Flexibility: Modern gas engines can be ramped up within 60 seconds, which suits the fluctuating power draw of AI computing.
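To put the 99.999 percent availability requirement into perspective, the short Python sketch below converts an availability target into the maximum downtime it allows per year. It assumes an average year of 365.25 days; the function name is illustrative.

```python
MINUTES_PER_YEAR = 365.25 * 24 * 60  # average year, including leap years


def allowed_downtime_minutes(availability: float) -> float:
    """Maximum outage per year compatible with a given availability fraction."""
    return (1.0 - availability) * MINUTES_PER_YEAR


for label, availability in [
    ("three nines", 0.999),
    ("four nines", 0.9999),
    ("five nines", 0.99999),
]:
    minutes = allowed_downtime_minutes(availability)
    print(f"{label} ({availability:.3%}): {minutes:.1f} minutes of downtime per year")
```

Five nines leaves only about five minutes of outage per year, which is why operators want firm supply rather than relying on weather-dependent generation alone.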

Visible Impacts and Regional Inventory

The AI boom has already measurably changed the global energy landscape. The International Energy Agency (IEA) reports that electricity demand in industrialized nations is growing again after 15 years of stagnation.

North America: The Engine of Expansion

The USA is home to almost half of all data centers worldwide. According to Global Energy Monitor, gas power projects with a total capacity of 252 gigawatts are under development in the US, which would increase the country's current gas power capacity by almost 50 percent. Texas tops the list with 80.6 GW of planned gas power plants, 40 GW of which are earmarked exclusively for data centers.

Europe: Regulation Meets Reality

In Ireland, data centers already consume over a fifth of the country's electricity. New rules require every new data center to bring its own dispatchable generation, and in practice that means gas. Dublin is now home to the first microgrid-operated data centers, which run entirely independently of the national power grid. In Germany, the Energy Efficiency Act (EnEfG) focuses on waste heat utilization, but dependence on the general electricity mix, which still includes gas, remains.

Asia and Southeast Asia: The New Growth Market

Southeast Asia is recording the highest growth rates. The region is home to over 2,000 data centers, and Malaysia alone has a project pipeline of 3.4 gigawatts. In countries like Indonesia and Vietnam, digital expansion is being driven largely by fossil fuels, because they are cheap and provide the necessary supply stability.

| Region | Capacity growth to 2030 | Gas expansion status |
|---|---|---|
| USA | +100 GW (entire market) | Leading; massive behind-the-meter trend |
| UK | Not specified | Moderate; focus on grid expansion |
| Germany | Not specified | Strictly regulated by EnEfG |
| China | Massive | Second-largest gas expansion worldwide |
| Malaysia | 3.4 GW (projects) | Fastest growth in Southeast Asia |

Table 2: Data centers and gas expansion in global regions

Forecasts on Climate Impacts

The ecological forecasts for the period up to 2030 are sobering. For years, the industry was able to keep power consumption stable through efficiency gains. But the AI era has ended this phase.

Emissions: The AI sector could emit an additional 200 million tons of CO₂ annually by 2030. According to a Cornell University study, AI servers in the US alone could add up to 44 million tons of CO₂ per year.

Electricity Share: Data centers could account for around 12 percent of total US electricity consumption by 2030. Globally, the IEA expects data center electricity consumption to more than double to around 945 terawatt-hours by 2030, roughly the entire electricity demand of Japan.

Methane Risk: The increased use of natural gas brings with it the risk of methane leaks. Because methane is a significantly more potent greenhouse gas than CO₂, the actual climate effect of the AI infrastructure could be higher than official reports suggest.
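The 945 TWh projection mentioned above is easier to grasp as a continuous load. The Python sketch below converts annual consumption into average power and, purely for illustration, estimates how many plants the size of the 2.5 GW West Texas project would be needed to supply it. The 85 percent capacity factor is an assumption, not a figure from the article, and the helper names are hypothetical.

```python
HOURS_PER_YEAR = 8_760


def average_load_gw(twh_per_year: float) -> float:
    """Convert annual consumption (TWh) into an average continuous load (GW)."""
    return twh_per_year * 1_000 / HOURS_PER_YEAR  # TWh -> GWh, divided by hours


def plants_needed(load_gw: float, plant_gw: float, capacity_factor: float) -> float:
    """Number of plants of a given size needed to supply the load on average."""
    return load_gw / (plant_gw * capacity_factor)


load = average_load_gw(945)  # projection quoted in the text
# Assumption: plants the size of the 2.5 GW West Texas project, running at an
# illustrative 85 % capacity factor.
print(f"945 TWh/year is about {load:.1f} GW of continuous load")
print(f"Equivalent 2.5 GW plants at 85% capacity factor: {plants_needed(load, 2.5, 0.85):.0f}")
```

Under these assumptions, global data center demand in 2030 corresponds to roughly 108 GW of round-the-clock load, or on the order of 50 plants of the West Texas size; this says nothing about how much of that load will actually be met by gas.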

Companies and Their Contribution to CO₂ Emissions

The gap between the sustainability promises of technology companies and physical reality is widening. Reports indicate that the actual emissions of the "Big Four", namely Google, Microsoft, Meta, and Amazon, are up to seven times higher than officially reported once indirect emissions are counted without offsetting them against renewable energy certificates.

The Hyperscalers' Backtracking

Google, which originally set out with the goal of operating completely carbon-free by 2030, now internally refers to this goal as a "moonshot". Microsoft describes the path to climate neutrality as a "marathon" after emissions have risen massively due to the construction of new data centers.

The following table shows the growing gap between aspiration and reality in climate protection:

| Company | Emissions growth (approx. 2020–2024) | Status of climate goals | Main reason for growth |
|---|---|---|---|
| Google | +48–50 % | "Moonshot" for 2030 at risk | AI infrastructure and data centers |
| Microsoft | +23–30 % | Carbon negative by 2030 | Building new data centers (Scope 3) |
| Meta | +60–66 % | Net zero by 2030 | Massive expansion of AI capacity |
| Amazon | +182 % (indirect) | Net zero by 2040 | Logistics and expansion of AWS cloud |

Table 3: Hyperscalers' emissions growth and climate goals

Investors are increasingly demanding transparency and doubting the effectiveness of Renewable Energy Certificates (RECs) as long as gas power plants are physically being built in regions with a fossil grid mix at the same time. The credibility of ESG reporting is at stake because the accounting often suggests progress that does not actually take place in physical reality.

Dependence on Gas in Times of Geopolitical Tensions

The current war of aggression by the USA and Israel against Iran shows how vulnerable global energy supply is. Due to the de facto blockade of the Strait of Hormuz by Iran, only a fraction of the previous amount of oil and gas can now be delivered via this route.

If demand for fossil energy rises at the same time due to the expansion of data centers, this acts as an additional price driver. The USA, for example, has large gas reserves of its own. But if these are burned to run data centers, they are no longer available to the world market, which pushes prices up.

Are Renewable Energies a Solution?

Technology companies are the world's largest buyers of solar and wind power. But the sheer volume of electricity required exceeds the available supply in many markets.

The Problem of Simultaneity

Renewable energies are weather-dependent. Data centers, however, need a constant base load, day and night. When the sun is not shining and the wind is not blowing, the electricity must come from storage, or from gas power plants. Although long-duration storage such as iron-air batteries is making progress, it is still at the pilot stage. Another approach is to build solar and wind parks directly at the data center. However, this requires enormous areas of land that are scarce near metropolitan areas.

The Role of Nuclear Energy

Nuclear energy is experiencing an unexpected renaissance due to the AI boom. For data center operators, it combines two crucial characteristics: It can be marketed as CO₂-free and it provides base load.

Revival of Old Reactors: Microsoft has signed an exclusive contract to restart Unit 1 at Three Mile Island. Amazon is pursuing similar plans for the Calvert Cliffs power plant.

Small Modular Reactors (SMRs): SMRs could theoretically be installed directly on the site of a data center. Meta is investing in partnerships with TerraPower and Oklo to secure up to 6.6 GW of nuclear capacity. Currently, however, no SMRs are in commercial operation outside China and Russia. According to expert estimates, significant SMR capacity will not be available until the mid-2030s at the earliest.

The disposal of nuclear waste also remains unresolved. Germany, for example, still has no final repository. A site search is currently underway, and it will very likely be more than 50 years before a repository is found and in operation.

Also not to be forgotten is the problematic mining of uranium as reactor fuel. It is associated with major environmental damage, and mining and transport cause large amounts of CO₂.

With uranium, states also make themselves dependent on autocratic regimes. Russia, for example, continues to supply 20 to 30 percent of the uranium needed in the EU – despite existing sanctions. Another globally important supplier of uranium is Kazakhstan.

Political and Regulatory Measures

Politicians have recognized the problem of increasing energy consumption by data centers and are reacting with a mix of transparency obligations and new market rules.

European Union: The EED Directive

With the recast of the Energy Efficiency Directive (EED), the EU has introduced far-reaching reporting obligations for data centers with an installed IT power demand of at least 500 kW. Since May 2025, operators must report data on energy consumption, water footprint, and waste heat utilization annually to a central European database. In the long term, an EU-wide rating system with minimum performance standards is to follow.

USA: FERC and the "Lightning Amendments"

In the US, the regulatory authority FERC is focusing on distributing the costs of grid expansion fairly. In Louisiana, a so-called "Lightning Amendment" was passed, which allows for accelerated approvals as long as the companies bear the full costs of the new infrastructure and residential customers are not burdened.

Can AI Contribute to Climate Protection?

Despite the high consumption, there are reasons for cautious optimism. AI can also be a tool for decarbonization. The net balance could be positive if the efficiency gains exceed the emissions.

Optimization of Renewable Energies: Google DeepMind uses AI to predict the power generation of wind farms 36 hours in advance. This has increased the economic value of these wind farms by 20 percent.

Smart Grids: AI-supported systems can balance supply and demand in the power grid in real-time. This facilitates the integration of millions of small solar installations and electric cars.

Scientific Discoveries: AI is accelerating research into new battery materials and highly efficient solar cells.

Efficiency in the Data Center: Google DeepMind was able to reduce the cooling energy requirement of Google's own data centers by 40 percent through AI control.

| AI application | Climate benefit | Mode of action |
|---|---|---|
| Wind energy forecasting | +20 % economic value | Fewer fossil backup plants thanks to more accurate forecasts |
| Facility management | 15–22 % energy savings | Deep-learning optimization of heating and cooling |
| Weather modeling (GraphCast) | Earlier warnings | More precise disaster relief and agricultural planning |
| Smart grid management | CO₂ reduction of up to 15 % | Intelligent load distribution, avoidance of peak-load plants |

Table 4: How AI can reduce energy consumption. Data sources: WEF, Harvard SEAS, Unified AI Hub, 2026

Conclusion: Is Climate Protection Done For Because of the AI Boom?

Analysis of the current situation leads to an ambivalent result. Technological progress has outpaced ecological protective mechanisms. The return of gas power plants, driven by the hunger for AI computing power, endangers the 1.5-degree target, which already seemed barely achievable.

At the same time, AI itself offers the most powerful tools ever available to manage complex systems such as the global climate and energy supply. The decisive factor will be time: Will we succeed in deploying AI so quickly that it generates efficiency gains in the global economy that overcompensate for its own footprint?

Currently, natural gas serves as the seemingly necessary but climate-damaging lubricant of a revolution that has yet to build a sustainable foundation of its own. The fate of climate goals depends on whether gas power plants remain a short-term bridge or, due to the sheer volume of AI investment, become a permanent dead end.

Climate protection is by no means "done for" because of the AI boom. But it has become more difficult, more expensive, and more dependent on technological discipline than ever before. The challenge for 2026 and beyond: to regulate the AI race in such a way that AI development does not undermine the planet's ability to survive.

