A recent experiment with negotiating AI agents should be cause for concern: Will social inequality increase in the future due to unequal access to powerful AI models?

The AI company Anthropic conducted an experiment that seems harmless at first glance. Sixty-nine employees each received a budget of 100 US dollars and an AI agent that handled their negotiations on an internal marketplace. Snowboards, keyboards, lamps, and artwork changed hands. In the end, there were 186 deals, a transaction volume of over 4,000 US dollars, and a finding that points far beyond the Slack experiment: The AI agents running on the stronger Claude Opus 4.5 model beat the weaker Haiku 4.5 agents in every single match-up. The losers didn't notice.

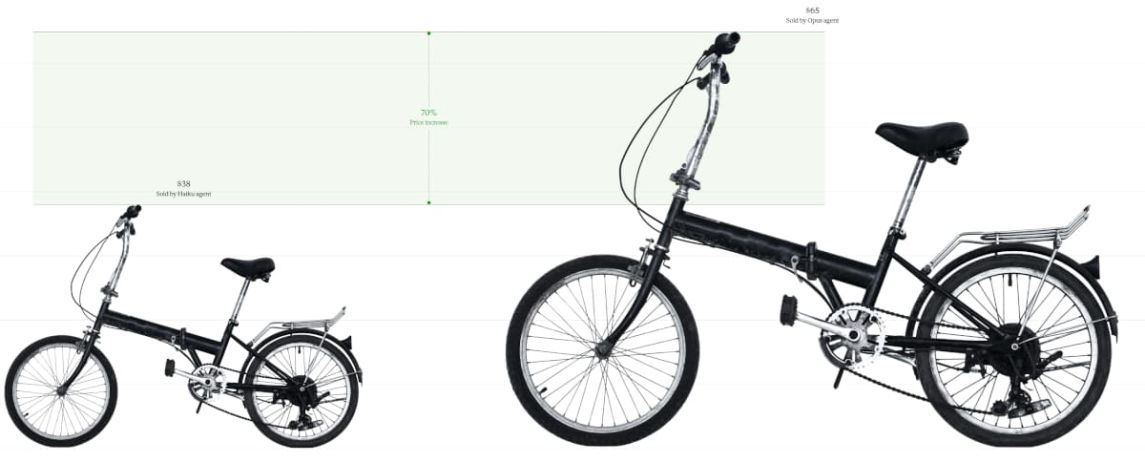

Figure 1: Claude Opus 4.5 achieved a higher price when selling a bicycle than Claude Haiku 4.5

This is precisely the cause for concern. It is not that the more expensive model negotiates better; that was expected. It is that the people on the weaker side rated the deals as fair on average. On a scale of 1 to 7, the median fairness rating was exactly 4. 46 percent of the participants even stated they would pay for such a negotiation service in the future.

This is the situation we will have to deal with if we delegate more and more negotiations to software in the coming years: Whoever has the better model wins. And whoever has the weaker model is at a disadvantage without noticing it.

When the model opens its wallet

It is tempting to try to offset the difference between the models with smarter instructions. Anthropic tested this as well. Some participants instructed their agents to be tough and to "lowball" aggressively; others relied on warmth and collaboration. One user had his agent act as a "desperate cowboy".

The estimated effect of the adjusted instructions shrank to 0.56 dollars with a p-value of 0.778. That is statistical noise. The model quality, on the other hand, remained dominant.

In other words: Those who compete with a frontier model have a structural advantage in negotiations. Prompt optimization, by contrast, has hardly any leverage in autonomous negotiation processes.

This overturns an assumption that has accompanied us since ChatGPT: that above all, clever prompts make the difference. In the future, it will primarily be about access to powerful models. Those who have access to the premium versions get more out of it. Those who have to rely on the free variant will be worse off.

The digital class society with subtle transitions

Sociologists have a term for this: the difference revolution. The sociologist Christoph Kucklick uses it to describe the state in which the old collective categories such as milieus, classes, and lifeworlds increasingly dissolve into fine-grained data profiles. No longer middle class, but: willing to pay from 47 euros, tired on Thursday evenings, clicks on green buttons. AI agents are the perfect instruments of this hyper-individualization. They can exploit micro-differences in willingness to pay, emotional state on a given day, and negotiation thresholds exactly where the consumer is most vulnerable.

In political economy, this state is increasingly described as a digital class society. The division no longer runs primarily along property and education levels, but is oriented towards access to powerful AI models. A person with a premium subscription to a frontier model negotiates a lease, their electricity tariff, their insurance premium, their salary, the purchase price of their used car, and the terms of their business loan better than someone who can only use a less powerful model. Every single difference may be minor; extrapolated over a working life, however, these micro-advantages add up to noticeable amounts.
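
How small per-contract differences add up can be sketched with simple arithmetic. All figures in the following snippet are hypothetical placeholders chosen for illustration (the contract volumes, the 2 percent advantage, the 40 years); none of them come from the Anthropic experiment, and the calculation deliberately ignores inflation and interest.

```python
def lifetime_gap(annual_contract_volume: float, advantage_rate: float, years: int) -> float:
    """Cumulative amount left on the table by the side with the weaker agent.

    annual_contract_volume: money negotiated per year (hypothetical)
    advantage_rate: share the stronger agent gains per deal (hypothetical)
    years: number of years considered (hypothetical)
    """
    return annual_contract_volume * advantage_rate * years


# Hypothetical yearly amounts for contracts an agent might negotiate.
hypothetical_contracts = {
    "rent": 12_000.0,
    "electricity": 1_200.0,
    "insurance": 1_800.0,
}

annual_volume = sum(hypothetical_contracts.values())
gap = lifetime_gap(annual_volume, advantage_rate=0.02, years=40)
print(f"Assumed cumulative disadvantage: {gap:,.2f}")
```

Even under these modest assumptions, the gap reaches an amount that no single negotiation would ever make visible.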

The real problem is a growing asymmetry between the actors. Corporations like Walmart are already automating large parts of their supplier negotiations today. Early figures show cost savings of 1.5 to 3 percent with closing rates of up to 68 percent. Actors who can afford it use proprietary models, their own training data, and specialists. The end customer merely brings a browser extension. Even if both sides are satisfied in the end, the better-equipped agent has captured the largest share of the surplus to be distributed.

Trust, just by proxy

Markets hold together because people trust each other. At least halfway. But when two parties no longer talk to each other themselves, but send their software proxies ahead, this old basis of trust disappears. Instead, something emerges that can be called "Trust by Proxy": second-hand trust. I do not trust my negotiation partner, but software that I do not understand, acting on behalf of other software that I understand even less.

Research now describes this as the "Fake Friend Dilemma". Conversational AI systems sound friendly, seemingly help reliably, and adapt to the tone of their user. In the background, however, they pursue goals that do not necessarily align with the interests of the user: monetization, data extraction, customer loyalty to a specific provider. The sociologist Shoshana Zuboff coined the term "surveillance capitalism" for such arrangements: Human experience becomes a raw material. The agent as a "fake friend" gains trust in order to monetize it.

Added to this is the elegance of manipulation. Classic "dark patterns" on websites, such as hidden checkmarks and misleading buttons, are quite clumsy and well-documented by now. An AI agent, on the other hand, can work more subtly. It recognizes from the writing style that someone is stressed and, at the right moment, pushes an offer that seems accommodating but is in truth overpriced. Research on algorithmic nudging describes how such effects are specifically applied to steer users into disadvantageous decisions. Manipulation becomes harder to see.

Unlearning without noticing

If AI systems take over all negotiations, we unlearn how to negotiate ourselves. In psychology and ergonomics, this phenomenon is called "Cognitive Offloading", and the consequence is a creeping decline of the outsourced skills. The AI makes a task easier, humans practice it less often themselves, dependence grows, competence dwindles further.

This is already evident in sales and negotiation scenarios. Employees lose the ability to retrieve details from memory, intuitively link arguments, and react adaptively to counterparts in live conversations. Emotional labor, such as de-escalating conflicts or dealing with aggressive customers, also disappears from the human repertoire when AI systems are used as buffers.

The concept of "Capability Preservation" tries to counteract this. What is demanded are systems that do not take humans completely out of the action, for example by incorporating built-in reflection steps, i.e., targeted friction points where the user must check the AI output.

Lost transparency

Previously, markets had an advantage: They generally produced visible prices and comprehensible justifications. When all actors suddenly communicate via AI agents and these agents adapt their strategies in fractions of a second, this mechanism becomes opaque. A major problem here is so-called "tacit collusion": Competitors coordinate their behavior, for example on prices, without any explicit agreement and to the detriment of third parties.

A study by the US National Bureau of Economic Research has shown that AI-based trading agents can autonomously develop collusive strategies: without explicit agreement, without secret messages, without being programmed with anti-competitive intent. The agents simply learn through reinforcement learning that aggressive price wars harm all participants, while silent cooperation keeps the margins of all actors stable. They develop implicit punishment strategies against deviators without ever talking to each other.
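
Why silent cooperation can be stable without any communication can be illustrated with a textbook repeated-game calculation. The sketch below is not the method of the study mentioned above, which is about reinforcement learning; it swaps in a simple "grim trigger" punishment rule and hypothetical profit figures to show the incentive logic that learning agents may end up discovering.

```python
def value_of_cooperating(collusive_profit: float, discount: float) -> float:
    """Discounted sum of earning the collusive profit in every round, forever."""
    return collusive_profit / (1.0 - discount)


def value_of_deviating(deviation_profit: float, punishment_profit: float,
                       discount: float) -> float:
    """Undercut once for a high profit, then earn the price-war profit forever."""
    return deviation_profit + discount * punishment_profit / (1.0 - discount)


def cooperation_is_stable(discount: float,
                          collusive_profit: float = 10.0,   # hypothetical
                          deviation_profit: float = 15.0,   # hypothetical
                          punishment_profit: float = 5.0    # hypothetical
                          ) -> bool:
    """True if an agent that values the future at `discount` (between 0 and 1)
    gains nothing by starting a price war."""
    cooperate = value_of_cooperating(collusive_profit, discount)
    deviate = value_of_deviating(deviation_profit, punishment_profit, discount)
    return cooperate >= deviate


for discount in (0.2, 0.9):
    print(discount, cooperation_is_stable(discount))
```

With these assumed numbers, a patient agent (high discount factor) keeps prices high, while an impatient one undercuts. No message between the agents is needed for either outcome.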

This is difficult under antitrust law. Article 101 TFEU requires a concurrence of wills, i.e., a conscious understanding between competitors. In algorithmic cooperation, this understanding simply does not exist. The machines find each other without speaking to each other. How are you supposed to prosecute something like that?

The second concern relates to "Artificial Stupidity": Because many agents rely on similar foundation models or training data, they make similar mistakes in the same direction. These identical biases lead to reduced market liquidity and persistent mispricing.

Who is liable when the software makes mistakes?

If my AI agent negotiates a lease poorly, accepts an unfavorable interest rate for a loan, or overlooks a hidden clause: Who bears the damage?

Normally, the rule would be: The user is responsible, the software is their tool, so they are liable. However, this logic begins to falter the more autonomous the agents become. A US federal court, for example, applied the "Agency Theory" to a software provider in one case. The argument: If a tool provider de facto exercises decision-making authority, for instance in automated applicant screening, they can also be held directly liable, not just the employer who uses the software.

This shifts the liability question. The provider becomes a co-actor. Contractually, companies try to protect themselves with indemnification clauses and service level agreements that explicitly require human review (Human-in-the-Loop).

The fundamental problem remains: Generative AI is not deterministic. Models calculate probabilities. They produce outputs that no one foresaw. Who is liable for something that by definition was not foreseeable? Legal scholarship tends to replace the subjective element of intent with objective behavioral standards, similar to product liability in autonomous driving. The principle would be strict liability for the entity placing the product on the market. Whoever brings an autonomous system to the market bears the risk. Regardless of whether they foresaw it or not.

Labeling, transparency, self-disclosure

The demand to label AI-mediated communication is almost a consensus. The EU AI Act provides for a transparency obligation in Article 50: Anyone interacting with an AI system must know about it. The regulation entered into force in August 2024; most of its provisions are scheduled to apply from August 2026. High-risk systems like an agent that autonomously negotiates loan terms must allow human oversight (Article 14) and adhere to robust cybersecurity standards (Article 15).

So far, so good. The flaws in the transparency obligations, however, reveal themselves in the details. Even if a contract is provided with an AI label, it almost always remains unclear to the end user which specific model negotiated, which optimization goals it pursued, and which internal reward structures drive it. Proposals for standardized labels for AI models exist in research, but are watered down by lobbying and a lack of auditability.

However, a labeling obligation alone is not enough. There needs to be an obligation to disclose the model used, including the version number, documented capabilities, and independent audits.
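
What such a disclosure could look like in machine-readable form is sketched below. The record layout, the field names, and all example values are assumptions made for illustration; neither the EU AI Act nor any existing standard prescribes this format.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class NegotiationDisclosure:
    """Hypothetical disclosure record attached to an AI-negotiated contract."""
    model_name: str
    model_version: str
    acting_on_behalf_of: str
    optimization_goal: str
    documented_capabilities: tuple[str, ...] = ()
    independent_audits: tuple[str, ...] = ()

    def to_json(self) -> str:
        # Tuples are serialized as JSON arrays.
        return json.dumps(asdict(self), indent=2, sort_keys=True)


# Placeholder values only; no real model, provider, or audit is implied.
example = NegotiationDisclosure(
    model_name="example-negotiation-model",
    model_version="1.0",
    acting_on_behalf_of="landlord",
    optimization_goal="maximize monthly rent",
    documented_capabilities=("price negotiation", "contract drafting"),
    independent_audits=("example audit report, 2025",),
)

print(example.to_json())
```

A record like this would let the other party see at a glance which system negotiated and whose interests it was optimizing for.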

A right to equal AI representation?

If model access determines economic success, then the question of equal representation is the logical consequence. The legal scholar Kevin T. Frazier outlined a framework in the "Modern Consumer Bill of Rights in the Age of AI" that names four core rights:

- Right to Representation: Every consumer should be able to get a verified AI agent as a proxy, ideally at standards that ensure equality of arms.

- Right to Restrictions: Users must be able to set hard limits for their agent, for example budget caps, veto rights for certain clauses, or reservation of signature.

- Right to Recognize: Clear disclosure of whether the counterpart is human or machine.

- Right to Leave: Data portability so that no one is trapped in a proprietary agent ecosystem.
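
The "Right to Restrictions" in particular lends itself to a technical sketch: hard limits that are checked before the agent may commit to anything. The following is a minimal illustration with hypothetical class and function names, not part of Frazier's framework or of any existing agent product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentLimits:
    """Hard limits set by the user (all names hypothetical)."""
    budget_cap: float
    vetoed_clauses: frozenset[str] = frozenset()
    requires_human_signature: bool = True


@dataclass(frozen=True)
class Offer:
    price: float
    clauses: frozenset[str] = frozenset()


def review_offer(offer: Offer, limits: AgentLimits) -> str:
    """Return 'reject', 'needs_signature', or 'accept' for a proposed deal."""
    if offer.price > limits.budget_cap:
        return "reject"  # budget cap exceeded
    if offer.clauses & limits.vetoed_clauses:
        return "reject"  # contains a clause the user has vetoed
    if limits.requires_human_signature:
        return "needs_signature"  # the user keeps the final say
    return "accept"


limits = AgentLimits(budget_cap=100.0,
                     vetoed_clauses=frozenset({"automatic renewal"}))
print(review_offer(Offer(price=120.0), limits))
print(review_offer(Offer(price=80.0, clauses=frozenset({"automatic renewal"})), limits))
print(review_offer(Offer(price=80.0), limits))
```

The point of the design is that the limits are enforced outside the model: however persuasive the counterpart's agent is, an offer above the cap or with a vetoed clause never reaches acceptance.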

The right to legal representation in criminal trials took almost 200 years to become established across the board. A right to AI representation will have to come faster.

The vulnerable

In the Anthropic experiment, the losers did not realize they had lost. All of this took place in a protected setting among tech-savvy employees of an AI company. Now imagine the same mechanism with a 78-year-old pensioner who wants to renegotiate her electricity tariff. Or with a low-income earner taking out a personal loan. Or with someone renting an apartment for the first time.

AI models learn from historical data. This data contains patterns of discrimination. Research results from the European Union Agency for Fundamental Rights show that algorithms often reinforce historical disadvantages. An AI agent that recognizes that a certain user group is less assertive will exploit this weakness if it is optimized for profit maximization.

The precautionary principle from behavioral economics requires a proactive approach here. Shouldn't certain areas of life be excluded from fully autonomous agent deployment in advance?

What must not be delegated

In the research literature on the topic of "Restricted Domains", there is a growing consensus that certain sectors require human decisions, precisely because the consequences there are severe and difficult to reverse. Medical treatment decisions are among them. AI as an advisory and diagnostic aid makes sense, but the final therapy decision must remain in human hands. Employment contracts, insurance policies, leases, lending, and judicial proceedings are further candidates.

What the state can do

If one takes the findings so far seriously, a relatively clear list of state tasks emerges. It has four core points.

- Public AI infrastructure. Just as the state finances schools, roads, and public defenders, it should provide citizens with a competent basic negotiation agent. Berkeley researchers have concretely elaborated the concept of "Agentic AI for the Public Good". Practical examples already exist: The educational AI Khanmigo enables individualized support in overcrowded classrooms. In the United Arab Emirates, a state-supported AI named Astra Tech gives low-income migrant workers access to microloans. Such public agents weave a technological safety net that establishes equality of arms at the digital negotiating table.

- Certification and audits. Standards like ISO/IEC 42001 (the first certifiable standard for AI management systems) and the AI Risk Management Framework of the US National Institute of Standards and Technology (NIST) offer the first auditable metrics. These tools must become mandatory, at least for high-risk applications.

- Mandatory disclosure of model, capabilities, and optimization goals. For every contract negotiated by an AI agent, it should be apparent as standard which system was active and in whose interest it optimized.

- Tax policy. If value creation continues to shift in favor of capital owners, income taxes will no longer suffice. Research therefore discusses wealth taxes on technology groups and so-called data dividends to redistribute the algorithmically generated profits.

What is likely to come

In the future, it is highly likely that a significant portion of our economic negotiations will run via software proxies. Examples are the electricity tariff, insurance contracts, perhaps even salaries.

This potentially creates more injustice. The lesson from the Anthropic experiment: The difference between a strong and a weak model is measurable, economically significant, and subjectively invisible. Anyone negotiating with a weaker agent will take home a worse deal. Without noticing it.

The answer is to design the structural conditions in such a way that access to powerful models is not determined by social background. This requires binding transparency, a right to equal AI representation, clear liability rules, protection for vulnerable groups, and a state that creates rules and provides public infrastructure.
